Artificial intelligence has entered a new chapter as we move through 2025 and into 2026. For the past few years, the world was captivated by generative AI models that could write essays, generate images, and answer basic queries with impressive speed. However, these tools remained largely passive, sitting idle until a human provided a specific prompt to trigger a response. We are now shifting away from this reactive model toward the era of “Agentic AI,” where digital systems act with a level of autonomy previously confined to science fiction.

These are no longer just chatbots; they are sophisticated digital agents capable of setting goals, planning complex workflows, and executing tasks without constant human hand-holding. This shift represents the move from “AI as a tool” to “AI as a coworker,” changing how businesses operate and how individuals manage their daily lives. As these agents gain the ability to use software, call APIs, and correct their own mistakes, the boundaries of productivity are being pushed further than before. This article explores the technology behind Agentic AI, its impact on the modern workforce, and a future in which autonomous agents become our primary collaborators.

A. Defining the Agentic Shift: Tool vs. Agent

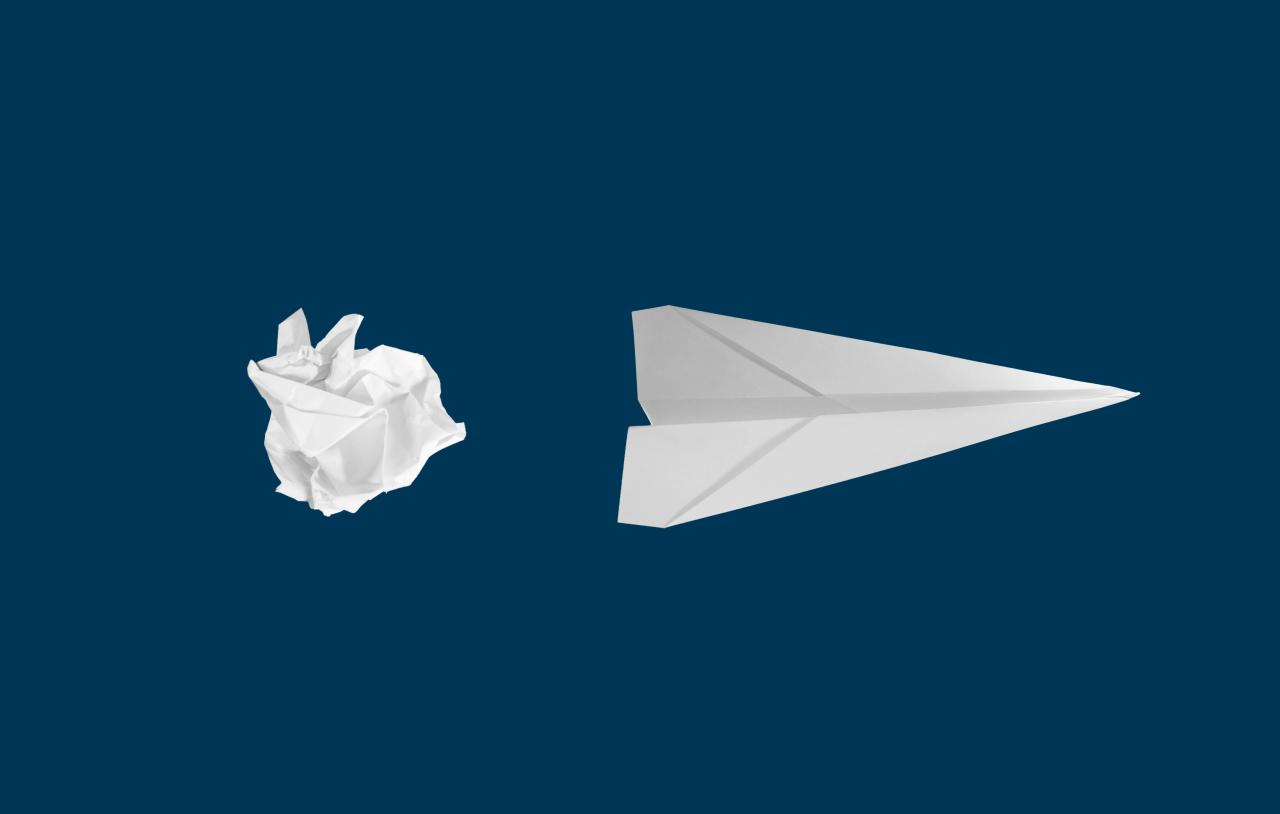

To understand the magnitude of this change, we must distinguish between the “Passive AI” we have used so far and the “Agentic AI” that is now emerging. A passive AI is like a calculator; it performs a function only when you press the buttons.

An Agentic AI, however, is more like a junior employee who understands a high-level objective and determines the steps necessary to achieve it. It possesses “agency,” meaning it can make choices based on the context of the situation.

A. Traditional AI models need explicit, step-by-step instructions for every part of a task.

B. Agentic systems use planning techniques, often built on “Chain of Thought” reasoning, to break a large goal into smaller, manageable sub-tasks.

C. Agents can access external tools, such as web browsers, code compilers, and internal databases, to gather information.

D. Self-correction is a hallmark of agency, where the AI recognizes a failed attempt and tries a different approach.

E. The shift allows humans to move from being “Doers” to being “Directors,” overseeing a fleet of autonomous digital workers.

B. The Architecture of Autonomy: How Agents Think

The “brain” of an Agentic AI is typically a Large Language Model (LLM), but it is wrapped in an architecture that provides memory and planning capabilities. Without these layers, the AI would simply “forget” what it was doing from one step to the next.

Engineers use “ReAct” patterns (Reasoning and Acting) to allow the model to think about what it needs to do, take an action, and then observe the result before moving on. This feedback loop is what creates the appearance of intelligent autonomy.
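The reason, act, observe cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production framework: `scripted_model` is a hypothetical stand-in for a real LLM call, and the `calculator` tool, the `Step` structure, and `run_agent` are names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    thought: str            # the model's reasoning for this turn
    action: Optional[str]   # name of the tool to call, or None when finished
    argument: str           # tool input, or the final answer when action is None


def calculator(expression: str) -> str:
    """Toy tool: adds integers written as 'a+b+c'."""
    return str(sum(int(part) for part in expression.split("+")))


TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator}


def scripted_model(goal: str, history: list[str]) -> Step:
    """Hypothetical stand-in for an LLM: picks the next step from the history."""
    if not history:
        return Step("I need to add the numbers.", "calculator", "19+23")
    return Step("I have the result.", None, history[-1])


def run_agent(goal: str, max_steps: int = 5) -> str:
    history: list[str] = []   # observations gathered so far
    for _ in range(max_steps):
        step = scripted_model(goal, history)              # reason
        if step.action is None:
            return step.argument                          # final answer
        observation = TOOLS[step.action](step.argument)   # act
        history.append(observation)                       # observe
    raise RuntimeError("step budget exhausted before the goal was reached")


print(run_agent("What is 19 + 23?"))
```

The `max_steps` budget is a design choice worth keeping in any real loop: it stops an agent that never reaches a final answer from running indefinitely.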

A. Short-term memory is managed through the “Context Window,” allowing the agent to keep track of the current task status.

B. Long-term memory involves “Vector Databases” that allow the agent to recall information from past projects or interactions.

C. Planning modules use algorithms to rank potential actions based on their likelihood of reaching the final goal.

D. Tool-use integration allows the agent to generate and execute code or call APIs to interact with the physical and digital world.

E. Reflection mechanisms force the agent to “critique” its own plan before execution to minimize errors and hallucinations.
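The long-term memory item in the list above can be illustrated with a toy similarity search. This is a minimal sketch, assuming hand-written three-dimensional vectors in place of real embeddings and a plain Python list in place of a vector database; the names `MEMORY` and `recall` and the stored notes are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy long-term memory: (note, vector) pairs. The vectors are made up for
# illustration; a real system would obtain them from an embedding model.
MEMORY = [
    ("Client prefers weekly status emails", [0.9, 0.1, 0.0]),
    ("Launch budget was capped last quarter", [0.1, 0.9, 0.1]),
    ("Logo must use the brand's blue palette", [0.0, 0.2, 0.9]),
]


def recall(query_vector: list[float], top_k: int = 1) -> list[str]:
    """Return the top_k notes whose vectors are most similar to the query."""
    ranked = sorted(
        MEMORY,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [note for note, _ in ranked[:top_k]]


print(recall([0.2, 0.8, 0.0]))
```

The recalled notes would then be placed into the context window, which is how the long-term and short-term memory layers work together.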

C. From Assistants to Autonomous Project Managers

In the early days of AI assistants, you might ask for a summary of a meeting. In the Agentic era, you tell the AI to “organize a marketing campaign for a new product launch.”

The agent will then research competitors, draft a social media schedule, contact influencers via email, and report back with a completed spreadsheet. It manages the project lifecycle from start to finish, only asking for human approval at critical junctures.

A. Agents can operate “asynchronously,” meaning they work in the background while the human user is focused on other tasks.

B. Multi-agent systems involve different AIs talking to each other, with one acting as a “Researcher” and another as an “Editor.”

C. Integration with enterprise software like Slack, Jira, and Salesforce allows agents to move data between departments seamlessly.

D. Project-specific agents can be “cloned,” allowing a business to scale its operations without a linear increase in human headcount.

E. Agents provide 24/7 monitoring, identifying potential project delays and suggesting fixes before they become major problems.
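The asynchronous and multi-agent ideas in the list above can be sketched with Python's `asyncio`. This is a minimal illustration, assuming two hypothetical agents, `researcher` and `editor`, whose bodies are placeholders for real model calls; the product names are invented for the example.

```python
import asyncio


async def researcher(topic: str) -> list[str]:
    """Hypothetical Researcher agent: returns raw notes for a topic."""
    await asyncio.sleep(0)   # placeholder for slow work such as web research
    return [f"competitor pricing for {topic}", f"audience channels for {topic}"]


async def editor(notes: list[str]) -> str:
    """Hypothetical Editor agent: turns raw notes into one tidy summary line."""
    await asyncio.sleep(0)   # placeholder for a model call
    return "; ".join(note.capitalize() for note in notes)


async def run_campaign(topic: str) -> str:
    notes = await researcher(topic)   # the Researcher hands off to the Editor
    return await editor(notes)


async def main() -> list[str]:
    # Two campaigns progress concurrently while the caller is free to do other work.
    return await asyncio.gather(run_campaign("smart kettle"), run_campaign("desk lamp"))


results = asyncio.run(main())
for line in results:
    print(line)
```

Running several `run_campaign` coroutines side by side is also a small picture of “cloning” a project-specific agent: the same logic is reused for each project without extra headcount.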

D. The Impact on White-Collar Professional Services

Legal, financial, and administrative sectors are seeing the most immediate impact from Agentic AI. These fields are data-heavy and rely on complex workflows that agents are well suited to navigate.

An autonomous legal agent can support the “Discovery” phase by scanning thousands of documents for specific keywords and then drafting a legal brief based on the findings. This can cut the time spent on “grunt work” from weeks to minutes.

A. Accounting agents can perform real-time audits, flagging suspicious transactions the moment they occur in the ledger.

B. Legal agents can stay updated on changing regulations across multiple jurisdictions, ensuring constant compliance.

C. Routine data analysis is shifting to “Analytic Agents” that don’t just find data but interpret its strategic meaning.

D. Human Resources agents can handle initial candidate screening, interview scheduling, and even the “onboarding” paperwork.

E. Insurance-claims agents can process simple claims autonomously and detect fraud patterns using visual AI.

E. Collaborative Intelligence: The Human-AI Team

The goal of Agentic AI is not necessarily total replacement, but rather “Augmentation.” This creates a new workflow called “Human-AI Teaming,” where both parties play to their strengths.

Humans provide the emotional intelligence, ethics, and high-level strategy, while the agents handle the data processing and repetitive execution. This collaboration allows small teams to accomplish tasks that previously required large corporations.

A. Emotional Intelligence remains a distinctly human strength, essential for client relations and high-stakes negotiations.

B. Ethical Guardrails are set by humans to ensure that autonomous agents don’t make biased or harmful decisions.

C. Strategy Definition is the human’s primary role, deciding what should be done rather than how to do it.

D. Agents act as “Force Multipliers,” taking a single human’s vision and executing it across thousands of channels.

E. Hybrid Workflows involve a constant “hand-off” between human intuition and machine-scale precision.

F. The Rise of Industry-Specific Agent Models

We are moving away from “General-Purpose AI” that tries to do everything toward “Domain-Specific Agents” that are experts in one field. A medical agent is trained on different data and follows different safety protocols than a creative writing agent.

These specialized agents are often smaller and faster because they don’t carry the “dead weight” of irrelevant knowledge. They are fine-tuned for the specific jargon and requirements of their particular industry.

A. Medical Agents assist doctors by cross-referencing patient symptoms with the latest global research and clinical trials.

B. Coding Agents act as “Pair Programmers,” writing, testing, and debugging software modules autonomously.

C. Creative Agents help designers by generating thousands of variations of a logo or UI based on specific brand guidelines.

D. Manufacturing Agents optimize supply chains by predicting part shortages and autonomously ordering replacements.

E. Educational Agents provide “Tutor-on-Demand” services, adapting their teaching style to each student’s specific learning pace.

G. Security and the “Agentic Attack Surface”

As we give AI the power to take actions, we also create new security risks. An agent that has the power to send emails or move money can be a dangerous tool if it is compromised by a malicious actor.

“Agent Hijacking,” a form of prompt injection, is a new concern where attackers supply prompts that trick an agent into ignoring its safety protocols. Securing these autonomous systems is a top priority for cybersecurity firms in 2026.

A. Sandboxing ensures that an agent can only access the specific files and software it needs for its current task.

B. Human-in-the-Loop (HITL) triggers require a human to click “Approve” before an agent can spend money or delete files.

C. Audit Logs provide a complete “paper trail” of every action an agent took and the reasoning behind those actions.

D. Adversarial Testing involves trying to “break” an agent’s logic to find vulnerabilities before it is deployed.

E. Decentralized Identifiers (DIDs) are being used to give agents their own digital credentials so their actions can be verified.
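Three of the safeguards listed above (an allowlist as a simple form of sandboxing, a Human-in-the-Loop trigger, and an audit log) can be combined in one small wrapper. This is a sketch under stated assumptions, not a security product: the class `GuardedExecutor`, the action names, and the `approve` callback are all hypothetical, and no real action is performed.

```python
from dataclasses import dataclass, field
from typing import Callable

ALLOWED_ACTIONS = {"read_file", "send_email", "delete_file"}   # sandbox allowlist
NEEDS_APPROVAL = {"send_email", "delete_file"}                 # HITL triggers


@dataclass
class GuardedExecutor:
    approve: Callable[[str, str], bool]            # callback that asks a human
    audit_log: list[dict] = field(default_factory=list)

    def execute(self, action: str, target: str, reason: str) -> str:
        if action not in ALLOWED_ACTIONS:
            outcome = "blocked"        # outside the sandbox
        elif action in NEEDS_APPROVAL and not self.approve(action, target):
            outcome = "denied"         # the human declined
        else:
            outcome = "executed"       # a real system would act here
        # Record every attempt, including the agent's stated reasoning.
        self.audit_log.append(
            {"action": action, "target": target, "reason": reason, "outcome": outcome}
        )
        return outcome


# Demo: the "human" declines every approval request.
executor = GuardedExecutor(approve=lambda action, target: False)
print(executor.execute("read_file", "report.txt", "gather context"))
print(executor.execute("delete_file", "report.txt", "clean up"))
print(executor.execute("transfer_funds", "acct-1", "pay invoice"))
print(len(executor.audit_log))
```

Logging blocked and denied attempts, not only successful ones, is deliberate: a hijacked agent is most visible in the actions it tried and failed to take.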

H. The Economics of the Autonomous Workforce

The widespread adoption of Agentic AI is fundamentally altering the cost of labor. When a digital agent costs a fraction of a human salary and works 24/7, the competitive landscape for businesses changes.

This is leading to a “Deflationary” effect on services, where the cost of high-quality professional work is dropping. While this is great for consumers, it forces human workers to find new ways to provide value that machines cannot replicate.

A. The “Marginal Cost of Intelligence” is dropping toward zero, making advanced analysis accessible to everyone.

B. Small Businesses are using agents to compete with giant corporations that have thousands of employees.

C. “Solo-preneurs” are becoming common, as one person can lead a company supported by fifty autonomous agents.

D. Wage Stagnation in repetitive white-collar roles is a growing concern as agents become “good enough” for most tasks.

E. The “Expertise Premium” is shifting toward those who know how to build, manage, and audit AI agents effectively.

I. Communication: When Agents Talk to Agents

One of the most fascinating trends in 2026 is the development of agent-to-agent communication protocols. In this model, your personal AI agent talks to a restaurant’s AI agent to book a table.

They negotiate the time, account for your dietary restrictions, and settle the payment without either human ever picking up a phone. This “Agentic Layer” of the internet is becoming a new marketplace for services.

A. Standardized Protocols, from proposals like “Agent Markup Language” to published specifications such as the Agent2Agent (A2A) protocol, are being developed to help different AIs understand each other.

B. Automated Negotiation allows agents to find the best price for services by talking to hundreds of vendors in seconds.

C. Conflict Resolution between agents occurs through “Digital Arbitrators” that decide whose logic should prevail.

D. Personal Privacy is a major hurdle, as your agent must know your secrets but not share them with the vendor’s agent.

E. The “Invisible Web” refers to the massive amount of traffic generated by agents talking to each other behind the scenes.
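The restaurant booking scenario, including the privacy hurdle in the list above, can be sketched as two functions exchanging a structured message. This is a minimal illustration and not an implementation of any real agent protocol: `BookingRequest`, `build_request`, `restaurant_agent`, and the profile data are all hypothetical.

```python
from dataclasses import dataclass

# Everything the personal agent knows about its user (invented sample data).
PRIVATE_PROFILE = {
    "name": "Alex",
    "home_address": "(private)",
    "dietary_needs": ["vegetarian"],
    "free_slots": ["18:30", "19:00", "20:00"],
}


@dataclass
class BookingRequest:
    party_size: int
    acceptable_times: list[str]
    dietary_needs: list[str]


def build_request(profile: dict, party_size: int) -> BookingRequest:
    """Personal agent: share only the fields the vendor needs, nothing else."""
    return BookingRequest(
        party_size, list(profile["free_slots"]), list(profile["dietary_needs"])
    )


def restaurant_agent(
    request: BookingRequest, open_slots: list[str], menus: set[str]
) -> dict:
    """Vendor agent: confirm the first requested time that is still open."""
    if not set(request.dietary_needs) <= menus:
        return {"status": "declined", "reason": "dietary needs not supported"}
    for time in request.acceptable_times:
        if time in open_slots:
            return {"status": "confirmed", "time": time}
    return {"status": "declined", "reason": "no matching time"}


request = build_request(PRIVATE_PROFILE, party_size=2)
reply = restaurant_agent(
    request, open_slots=["19:00", "21:00"], menus={"vegetarian", "vegan"}
)
print(reply)
```

The privacy boundary lives in `build_request`: the vendor's agent only ever sees the `BookingRequest`, so fields such as the home address never leave the personal agent.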

J. The Psychological Shift in the Modern Office

Working alongside an autonomous agent requires a mental adjustment for human employees. It can be unsettling to see a piece of software making decisions that were previously the domain of senior staff.

Management styles are evolving to focus on “Algorithmic Oversight” rather than traditional task management. This creates a more “Director-style” environment where humans focus on the “Big Picture” while machines handle the details.

A. Trust Calibration is the process of learning when to trust an agent’s output and when to double-check it.

B. “AI Envy” is a new workplace phenomenon where employees feel threatened by the efficiency of digital coworkers.

C. Upskilling is no longer about learning a specific tool, but about learning the logic of “Agentic Management.”

D. Workplace Culture is being redefined to include “Digital Colleagues” in the company’s social and professional fabric.

E. Job Satisfaction can increase as the most boring, repetitive parts of the day are handed off to agents.

K. Ethical Dilemmas of Autonomous Decision Making

Who is responsible when an autonomous agent makes a mistake that loses a million dollars? This “Liability Gap” is one of the biggest legal and ethical questions of our time.

If an AI makes a decision that is technically correct but morally questionable, we have to look at the “Objective Function” it was given. We are learning that how we define a “Goal” for an agent is a high-stakes ethical act.

A. Bias in Goal Setting occurs when an agent is told to “maximize profit” without being told to “act ethically.”

B. The “Alignment Problem” refers to the difficulty of ensuring an AI’s goals perfectly match human values.

C. Transparency is required so that anyone affected by an AI’s decision can see the “Why” behind the action.

D. Universal Basic Income (UBI) is being discussed more seriously as autonomous agents displace more workers.

E. The “Right to a Human” is becoming a legal standard, ensuring people can always talk to a person in critical situations.

L. The Future: A World of Personal AI Legions

By 2027, the concept of a single “Digital Assistant” may already seem dated. Many individuals could have a “Personal Legion” of specialized agents that manage their finances, health, education, and career.

This will lead to an era of hyper-productivity, but also a world where the “Digital Divide” becomes a gap between those who have agents and those who don’t. The ultimate goal is a future where AI removes the “Friction of Life,” allowing humans to focus on creativity, connection, and purpose.

A. Personal Data Sovereignty will be the most important political issue as agents become our primary interfaces with the world.

B. “Agentic Literacy” will be the most important skill taught in schools, replacing traditional rote memorization.

C. The “Post-Work Economy” is a theoretical future where most essential labor is performed by autonomous agents.

D. Emotional AI Agents will provide mental health support and companionship, further blurring the line between human and machine.

E. The “Singularity” might not be one giant AI, but a massive web of billions of small, interconnected agents.

Conclusion

The transition from passive assistants to agentic coworkers is one of the most significant changes in the history of labor. We are no longer just using software; we are leading teams of autonomous digital entities. This technology allows us to scale our human potential far beyond the limits of our own time and energy.

However, the rise of agentic systems brings profound risks to our security and our economic structures. We must build robust ethical and legal frameworks to ensure these agents act in our best interests, and security will remain a constant battle as attackers try to weaponize their autonomy.

The professional services industry is likely to be the first to be redefined by this new machine logic. Humans will find their greatest value in strategy, empathy, and the management of these complex systems. The speed of innovation in this field means that the office of 2030 will look very different from the office of 2020.

Education must pivot to teach the next generation how to collaborate with autonomous agents, and every business, large or small, will need an “Agentic Strategy” to compete in the new digital economy. The future is not just about smarter machines but about a more powerful partnership between humans and AI.